Speechify has announced the early rollout of SIMBA 3.0, its latest generation of production voice AI models, now available to select third-party developers through the Speechify Voice API, with full general availability planned for March 2026. Built by the Speechify AI Research Lab, SIMBA 3.0 provides high-quality text-to-speech, speech-to-text, and speech-to-speech capabilities that developers can integrate directly into their own products and platforms.

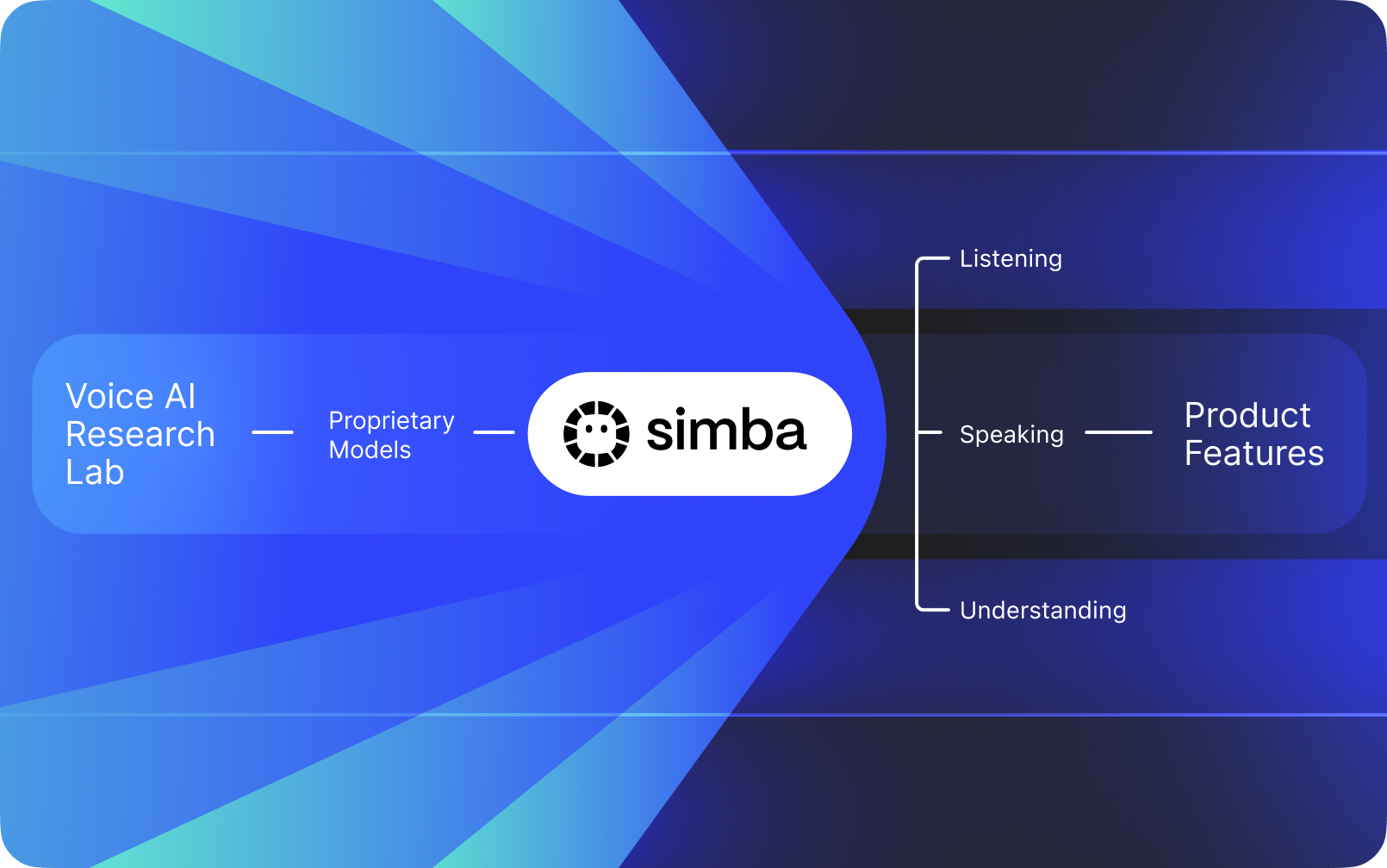

Speechify is not a voice interface layered on top of third-party AI. It operates its own AI Research Lab dedicated to building proprietary voice models. These models are offered to outside developers and companies through the Speechify API for integration into any application, from AI receptionists and customer support bots to content platforms and accessibility tools.

Speechify also uses these same models to power its own consumer products, while giving developers access through the Speechify Voice API. This matters because the quality, latency, cost, and long-term direction of Speechify's voice models are controlled by its in-house research team rather than by outside vendors.

Speechify's voice models are purpose-built for production voice workloads and deliver state-of-the-art quality at scale. Third-party developers can access SIMBA 3.0 and Speechify voice models directly through the Speechify Voice API, which provides production REST endpoints, full API documentation, developer quickstart guides, and official Python and TypeScript SDKs. The Speechify developer platform is designed for fast integration, production deployment, and scalable voice infrastructure, so teams can move quickly from the first API call to live voice features.

This article explains what SIMBA 3.0 is, what the Speechify AI Research Lab builds, and why Speechify delivers top-tier voice AI model quality, low latency, and cost efficiency for production applications, strengthening its position as a leading voice AI provider ahead of other providers such as OpenAI, Gemini, Anthropic, ElevenLabs, Cartesia, and Deepgram.

What Does It Mean to Call Speechify an AI Research Lab?

An Artificial Intelligence lab is a dedicated research and engineering organization where specialists in machine learning, data science, and computational modeling work together to design, train, and deploy advanced intelligent systems. When people say "AI Research Lab," they usually mean an organization that does two things at the same time:

1. Develops and trains its own models

2. Makes those models available to developers through production APIs and SDKs

Some organizations excel at building models but do not make them available to outside developers. Others offer APIs but rely mostly on third-party models. Speechify operates a vertically integrated voice AI stack: it builds its own voice AI models, makes them available to third-party developers through production APIs, and uses them in its own consumer applications to validate performance at scale.

The Speechify AI Research Lab is an in-house organization focused on voice intelligence. Its mission is to advance text-to-speech, automatic speech recognition, and speech-to-speech systems so that developers can build voice-first applications for any use case, from AI receptionists and voice agents to narration engines and accessibility tools.

A real voice AI research lab typically has to solve:

• Text-to-speech quality and naturalness for production

• Speech-to-text and ASR accuracy across accents and in noisy conditions

• Real-time latency for turn-taking dialogue in AI agents

• Long-form stability for extended listening experiences

• Document understanding for handling PDFs, web pages, and structured content

• OCR and page parsing for scanned documents and images

• A product feedback loop that improves models over time

• Developer infrastructure that exposes voice capabilities through APIs and SDKs

The Speechify AI Research Lab builds these systems as a unified architecture and makes them accessible to developers through the Speechify Voice API, which is available for third-party integration on any platform or application.

What Is SIMBA 3.0?

SIMBA is Speechify's proprietary family of voice AI models, powering both Speechify's own products and its offerings to third-party developers through the Speechify API. SIMBA 3.0 is the latest generation, optimized for voice-first performance, speed, and real-time interaction, and is available for outside developers to integrate into their own platforms.

SIMBA 3.0 is engineered to deliver high voice quality, low-latency response, and long-form listening stability at production scale, enabling developers to build professional voice applications across industries.

For third-party developers, SIMBA 3.0 enables use cases including:

• AI voice agents and conversational AI systems

• Customer support automation and AI receptionists

• Outbound calling systems for sales and service

• Voice assistants and speech-to-speech applications

• Content narration and audiobook generation platforms

• Accessibility tools and assistive technology

• Educational platforms with voice-driven learning

• Healthcare applications that require empathetic voice interaction

• Multilingual translation and communication apps

• Voice-enabled IoT and automotive systems

When users say a voice "sounds human," they are describing several technical elements working together:

- Prosody (rhythm, pitch, stress)

- Meaning-aware pacing

- Natural pauses

- Stable pronunciation

- Intonation shifts aligned with syntax

- Emotional neutrality when appropriate

- Expressiveness when helpful

SIMBA 3.0 is the model layer developers integrate to make voice experiences sound natural at high speed, over long sessions, and across many content types. For production voice workloads, from AI phone systems to content platforms, SIMBA 3.0 is optimized to outperform general-purpose voice layers.

How Does Speechify Use SSML for Precise Speech Control?

Speechify supports Speech Synthesis Markup Language (SSML) so developers can control precisely how synthesized speech sounds. SSML lets developers adjust pitch, speaking rate, pauses, emphasis, and style by wrapping content in <speak> tags and using supported tags such as prosody, break, emphasis, and substitution. This gives teams fine-grained control over delivery and structure, helping voice output better match context, formatting, and intent in production applications.
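As a rough illustration of those tags, the sketch below assembles a small SSML document in Python and checks that it is well-formed XML. It assumes the element and attribute names from the W3C SSML specification (`prosody`, `break`, `emphasis`, and `sub` with an `alias` attribute for substitution); which attributes and values the Speechify API accepts is not stated here, so treat the specifics as assumptions. The helper name `build_ssml` is hypothetical.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape, quoteattr


def build_ssml(intro: str, key_phrase: str, abbreviation: str, spoken_form: str) -> str:
    """Assemble a small SSML document from plain-text pieces (hypothetical helper)."""
    parts = [
        "<speak>",
        # prosody: slow the introduction slightly and lower the pitch by two semitones.
        f'<prosody rate="90%" pitch="-2st">{escape(intro)}</prosody>',
        # break: insert a half-second pause.
        '<break time="500ms"/>',
        # emphasis: stress the key phrase.
        f'<emphasis level="strong">{escape(key_phrase)}</emphasis>',
        # sub: speak the alias instead of the written abbreviation.
        f"<sub alias={quoteattr(spoken_form)}>{escape(abbreviation)}</sub>",
        "</speak>",
    ]
    return "".join(parts)


ssml = build_ssml(
    "Welcome back.",
    "Your order has shipped",
    "ETA",
    "estimated time of arrival",
)
# Parsing raises xml.etree.ElementTree.ParseError if the markup is malformed.
root = ET.fromstring(ssml)
```

Escaping the text pieces before wrapping them keeps characters such as `&` or `<` in user content from breaking the markup, which is the most common failure when SSML is built from untrusted strings.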

How Does Speechify Enable Real-Time Audio Streaming?

Speechify provides a streaming text-to-speech endpoint that delivers audio in chunks as it is generated, so playback can start immediately instead of waiting for the full audio to be ready. This supports use cases such as voice agents, assistive technology, automated podcasts, and audiobook production. Developers can also send very large inputs beyond the standard limits and receive raw audio chunks in formats including MP3, OGG, AAC, and PCM for fast integration into real-time systems.
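The consuming side of a chunked audio stream can be sketched without reference to any particular endpoint. In the example below, `fake_audio_stream` is a stand-in generator and `consume_stream` is a hypothetical helper; neither is part of the Speechify SDK. In a real integration the iterable would be whatever chunk iterator the HTTP client or SDK returns.

```python
import io
import time
from typing import BinaryIO, Iterable, Iterator, Optional, Tuple


def fake_audio_stream(total_chunks: int = 5, chunk_size: int = 1024) -> Iterator[bytes]:
    """Stand-in for a streaming TTS response; yields fixed-size byte chunks."""
    for i in range(total_chunks):
        yield bytes([i % 256]) * chunk_size


def consume_stream(chunks: Iterable[bytes], sink: BinaryIO) -> Tuple[int, Optional[float]]:
    """Write each chunk to `sink` as soon as it arrives.

    Returns (total_bytes, seconds_until_first_chunk); the delay is None
    if the stream produced no chunks.
    """
    started = time.monotonic()
    first_chunk_delay: Optional[float] = None
    total = 0
    for chunk in chunks:
        if first_chunk_delay is None:
            first_chunk_delay = time.monotonic() - started
        sink.write(chunk)  # a player or socket could start using this immediately
        total += len(chunk)
    return total, first_chunk_delay


buffer = io.BytesIO()
total_bytes, first_delay = consume_stream(fake_audio_stream(), buffer)
```

Tracking the delay before the first chunk separately from the total transfer time is the point of the sketch: for streaming playback, time to first audio matters more than time to last byte.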

How Do Speech Marks Synchronize Text and Audio in Speechify?

Speech marks map spoken audio to the original text with word-level timing data. Each synthesis response includes aligned text chunks that show when each word starts and ends in the audio stream. This enables real-time text highlighting, precise seeking by word or phrase, usage analytics, and tight sync between on-screen text and playback. Developers can use this structure to build accessible readers, learning tools, and interactive listening experiences.
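To show how word-level timing can drive text highlighting, here is a minimal sketch. The `WordMark` structure, its field names, and the sample timings are assumptions made for illustration; the actual response schema should be taken from the API documentation. The lookup assumes marks are sorted by start time.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class WordMark:
    """Hypothetical word-timing record: text plus start/end offsets in milliseconds."""
    text: str
    start_ms: int
    end_ms: int


def word_at(marks: List[WordMark], position_ms: int) -> Optional[WordMark]:
    """Return the word being spoken at `position_ms`, or None between words.

    `marks` must be sorted by `start_ms`; `end_ms` is treated as exclusive.
    """
    starts = [m.start_ms for m in marks]
    idx = bisect_right(starts, position_ms) - 1  # last word starting at or before position
    if idx < 0:
        return None
    mark = marks[idx]
    return mark if position_ms < mark.end_ms else None


# Invented sample timings, for illustration only.
marks = [
    WordMark("Speech", 0, 320),
    WordMark("marks", 340, 700),
    WordMark("align", 720, 1050),
    WordMark("text", 1070, 1400),
]
```

A reader UI would call `word_at` with the player's current position on each tick and highlight the returned word; the same index lookup in reverse (word to `start_ms`) supports seeking to a word.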

How Does Speechify Support Emotional Expression in Synthesized Speech?

Speechify includes emotion control through a dedicated SSML style tag that lets developers set the emotional tone of the spoken output. Supported emotions include cheerful, calm, assertive, energetic, sad, and angry. By combining emotion tags with punctuation and other SSML controls, developers can produce speech that better matches intent and context. This is especially useful for voice agents, wellness apps, customer support flows, and guided content where tone shapes the user experience.

Real-World Developer Use Cases for Speechify Voice Models

Speechify's voice models power production applications across a range of industries. Here are real examples of how third-party developers use the Speechify API:

MoodMesh: Emotionally Intelligent Wellness Applications

MoodMesh, a wellness technology company, integrated the Speechify Text-to-Speech API to deliver emotionally modulated speech for guided meditations and compassionate conversations. Using Speechify's SSML support and emotion control features, MoodMesh adjusts tone, cadence, volume, and speech rate to match the user's emotional context, creating human-like interactions that standard TTS could not deliver. This shows how developers use Speechify models to build sophisticated applications that require emotional intelligence and contextual awareness.

AnyLingo: Multilingual Communication and Translation

AnyLingo, a real-time translation messenger app, uses Speechify's voice cloning API so users can send voice messages in a cloned version of their own voice, translated into the recipient's language with the right inflection, tone, and context. The integration lets professionals communicate effectively across languages while keeping the personal touch of their own voice. AnyLingo's founder notes that Speechify's emotion control features ("Moods") are key elements, because they let messages match the right emotional tone for each situation.

Additional Third-Party Developer Use Cases:

Conversational AI and Voice Agents

Developers building AI receptionists, customer support bots, and call automation systems use Speechify's low-latency speech-to-speech models for natural-sounding voice interactions. With sub-250ms latency and voice cloning capabilities, these applications can scale to millions of calls while maintaining voice quality and conversational flow.

Content Platforms and Audiobook Generation

Publishers, authors, and educational platforms integrate Speechify models to turn written text into high-quality narration. The models' optimization for long-form stability and clarity at high playback speeds makes them well suited to generating audiobooks, podcasts, and educational materials at scale.

Accessibility and Assistive Technology

Developers building tools for people with low vision or reading difficulties rely on Speechify's document understanding capabilities, including PDF parsing, OCR, and web page extraction, so that voice output preserves structure and comprehension even in complex documents.

Healthcare and Therapeutic Applications

Medical platforms and therapeutic applications use Speechify's emotion control and prosody features to deliver empathetic, contextually appropriate voice interactions, which is critical for patient communication, mental health support, and wellness apps.

How Does SIMBA 3.0 Perform on Independent Voice Model Benchmarks?

Independent benchmarking matters in voice AI because short demos can hide performance gaps. One of the most widely cited references is the Artificial Analysis Speech Arena leaderboard, which evaluates text-to-speech models through large-scale blind listening comparisons and Elo scoring.
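For readers unfamiliar with Elo scoring, the sketch below shows the standard Elo update applied to a single blind preference vote between two models. It uses the textbook formula with a 400-point scale and a K-factor of 32; the exact parameters and aggregation method used by Artificial Analysis are not described here, so these values are assumptions, and the model names are placeholders.

```python
from typing import Dict


def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def record_vote(ratings: Dict[str, float], winner: str, loser: str, k: float = 32.0) -> None:
    """Update both ratings in place after a listener prefers `winner` over `loser`."""
    surprise = 1.0 - expected_score(ratings[winner], ratings[loser])
    delta = k * surprise  # larger shift when the result was unexpected
    ratings[winner] += delta
    ratings[loser] -= delta


# Placeholder models starting from the same rating.
ratings = {"model_a": 1000.0, "model_b": 1000.0}
record_vote(ratings, winner="model_a", loser="model_b")
```

Because each vote moves the two ratings by equal and opposite amounts, the total rating pool stays constant, and a model only climbs by repeatedly winning comparisons it was not already expected to win.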

Speechify's SIMBA voice models rank above several major providers on the Artificial Analysis Speech Arena leaderboard, including Microsoft Azure Neural, Google TTS models, Amazon Polly, NVIDIA Magpie, and several open-weight voice systems.

Rather than relying on curated examples, Artificial Analysis uses repeated head-to-head listener preference testing across many samples. This ranking confirms that SIMBA 3.0 outperforms widely deployed commercial voice systems, winning on model quality in real listening comparisons and establishing it as the best production-ready choice for developers building voice applications.

Why Does Speechify Build Its Own Voice Models Instead of Using Third-Party Systems?

Control over the model means control over:

• Quality

• Latency

• Cost

• Roadmap

• Optimization priorities

When companies like Retell or Vapi.ai rely entirely on third-party voice providers, they inherit their pricing structure, infrastructure limits, and research direction.

By owning its full stack, Speechify can:

• Tune prosody for specific use cases (conversational AI vs. long-form narration)

• Optimize latency below 250ms for real-time applications

• Integrate ASR and TTS seamlessly in speech-to-speech pipelines

• Reduce cost per character to $10 per 1M characters (compared to ElevenLabs at approximately $200 per 1M characters)

• Ship model improvements continuously based on production feedback

• Align model development with developer needs across industries

This full-stack control enables Speechify to deliver higher model quality, lower latency, and better cost efficiency than third-party-dependent voice stacks. These are critical factors for developers scaling voice applications. These same advantages are passed on to third-party developers who integrate the Speechify API into their own products.

Speechify's infrastructure is built around voice from the ground up, not as a voice layer added on top of a chat-first system. Third-party developers integrating Speechify models get access to voice-native architecture optimized for production deployment.

How Does Speechify Support On-Device Voice AI and Local Inference?

Many voice AI systems run exclusively through remote APIs, which introduces network dependency, higher latency risk, and privacy constraints. Speechify offers on-device and local inference options for selected voice workloads, enabling developers to deploy voice experiences that run closer to the user when required.

Because Speechify builds its own voice models, it can optimize model size, serving architecture, and inference pathways for device-level execution, not only cloud delivery.

On-device and local inference supports:

• Lower and more consistent latency in variable network conditions

• Greater privacy control for sensitive documents and dictation

• Offline or degraded-network usability for core workflows

• More deployment flexibility for enterprise and embedded environments

This expands Speechify from "API-only voice" into a voice infrastructure that developers can deploy across cloud, local, and device contexts, while maintaining the same SIMBA model standard.

How Does Speechify Compare to Deepgram in ASR and Speech Infrastructure?

Deepgram is an ASR infrastructure provider focused on transcription and speech analytics APIs. Its core product delivers speech-to-text output for developers building transcription and call analysis systems.

Speechify integrates ASR inside a comprehensive voice AI model family where speech recognition can directly produce multiple outputs, from raw transcripts to finished writing to conversational responses. Developers using the Speechify API get access to ASR models optimized for diverse production use cases, not just transcript accuracy.

Speechify's ASR and dictation models are optimized for:

• Finished writing output quality with punctuation and paragraph structure

• Filler word removal and sentence formatting

• Draft-ready text for emails, documents, and notes

• Voice typing that produces clean output with minimal post-processing

• Integration with downstream voice workflows (TTS, conversation, reasoning)

In the Speechify platform, ASR connects to the full voice pipeline. Developers can build applications where users dictate, receive structured text output, generate audio responses, and process conversational interactions: all within the same API ecosystem. This reduces integration complexity and accelerates development.

Deepgram provides a transcription layer. Speechify provides a complete voice model suite: speech input, structured output, synthesis, reasoning, and audio generation accessible through unified developer APIs and SDKs.

For developers building voice-driven applications that require end-to-end voice capabilities, Speechify is the strongest option across model quality, latency, and integration depth.

How Does Speechify Compare to OpenAI, Gemini, and Anthropic in Voice AI?

Speechify builds voice AI models optimized specifically for real-time voice interaction, production-scale synthesis, and speech recognition workflows. Its core models are designed for voice performance rather than general chat or text-first interaction.

Speechify's specialization is voice AI model development, and SIMBA 3.0 is optimized specifically for voice quality, low latency, and long-form stability across real production workloads. SIMBA 3.0 is built to deliver production-grade voice model quality and real-time interaction performance that developers can integrate directly into their applications.

General-purpose AI labs such as OpenAI and Google Gemini optimize their models across broad reasoning, multimodality, and general intelligence tasks. Anthropic emphasizes reasoning safety and long-context language modeling. Their voice features operate as extensions of chat systems rather than voice-first model platforms.

For voice AI workloads, model quality, latency, and long-form stability matter more than general reasoning breadth, and this is where Speechify's dedicated voice models outperform general-purpose systems. Developers building AI phone systems, voice agents, narration platforms, or accessibility tools need voice-native models, not voice layers on top of chat models.

ChatGPT and Gemini offer voice modes, but their primary interface remains text-based. Voice functions as an input and output layer on top of chat. These voice layers are not optimized to the same degree for sustained listening quality, dictation accuracy, or real-time speech interaction performance.

Speechify is built voice-first at the model level. Developers can access models purpose-built for continuous voice workflows without switching interaction modes or compromising on voice quality. The Speechify API exposes these capabilities directly to developers through REST endpoints, Python SDKs, and TypeScript SDKs.

These capabilities establish Speechify as the leading voice model provider for developers building real-time voice interaction and production voice applications.

Within voice AI workloads, SIMBA 3.0 is optimized for:

• Prosody in long-form narration and content delivery

• Speech-to-speech latency for conversational AI agents

• Dictation-quality output for voice typing and transcription

• Document-aware voice interaction for processing structured content

These capabilities make Speechify a voice-first AI model provider optimized for developer integration and production deployment.

What Are the Core Technical Pillars of Speechify's AI Research Lab?

Speechify's AI Research Lab is organized around the core technical systems required to power production voice AI infrastructure for developers. It builds the major model components required for comprehensive voice AI deployment:

• TTS models (speech generation) - Available via API

• STT & ASR models (speech recognition) - Integrated in the voice platform

• Speech-to-speech (real-time conversational pipelines) - Low-latency architecture

• Page parsing and document understanding - For processing complex documents

• OCR (image to text) - For scanned documents and images

• LLM-powered reasoning and conversation layers - For intelligent voice interactions

• Infrastructure for low-latency inference - Sub-250ms response times

• Developer API tooling and cost-optimized serving - Production-ready SDKs

Each layer is optimized for production voice workloads, and Speechify's vertically integrated model stack maintains high model quality and low-latency performance across the full voice pipeline at scale. Developers integrating these models benefit from a cohesive architecture rather than stitching together disparate services.

Each of these layers matters. If any layer is weak, the overall voice experience feels weak. Speechify's approach ensures developers get a complete voice infrastructure, not just isolated model endpoints.

What Role Do STT and ASR Play in the Speechify AI Research Lab?

Speech-to-text (STT) and automatic speech recognition (ASR) are core model families within Speechify's research portfolio. They power developer use cases including:

• Voice typing and dictation APIs

• Real-time conversational AI and voice agents

• Meeting intelligence and transcription services

• Speech-to-speech pipelines for AI phone systems

• Multi-turn voice interaction for customer support bots

Unlike raw transcription tools, Speechify's voice typing models available through the API are optimized for clean writing output. They:

• Insert punctuation automatically

• Structure paragraphs intelligently

• Remove filler words

• Improve clarity for downstream use

• Support writing across applications and platforms

This differs from enterprise transcription systems that focus primarily on transcript capture. Speechify's ASR models are tuned for finished output quality and downstream usability, so speech input produces draft-ready content rather than cleanup-heavy transcripts. This is critical for developers building productivity tools, voice assistants, or AI agents that need to act on spoken input.
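To make the difference between a raw transcript and draft-ready text concrete, here is a deliberately simple rule-based sketch of filler removal and sentence formatting. It is not how Speechify's models work (those are trained models, not regular expressions); the filler list and the `clean_transcript` helper are hypothetical and only illustrate the kind of transformation involved.

```python
import re

# Illustrative filler list, not a product vocabulary.
FILLERS = ("um", "uh", "erm")
_FILLER_RE = re.compile(r"\b(?:" + "|".join(FILLERS) + r")\b,?\s*", re.IGNORECASE)


def clean_transcript(raw: str) -> str:
    """Drop filler words, normalize spacing, capitalize, and add a final period."""
    text = _FILLER_RE.sub("", raw)
    text = re.sub(r"\s+", " ", text).strip()
    if not text:
        return text
    text = text[0].upper() + text[1:]
    if text[-1] not in ".!?":
        text += "."
    return text


cleaned = clean_transcript("um so I think uh we should ship on friday")
```

A rule-based pass like this breaks down quickly (it cannot tell a filler "like" from a meaningful one, or infer paragraph breaks), which is why the article frames clean output as a model-level property rather than a post-processing step.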

What Makes TTS "High Quality" for Production Use Cases?

Most people judge TTS quality by whether it sounds human. Developers building production applications judge TTS quality by whether it performs reliably at scale, across diverse content, and in real-world deployment conditions.

High-quality production TTS requires:

• Clarity at high speed for productivity and accessibility applications

• Low distortion at faster playback rates

• Pronunciation stability for domain-specific terminology

• Listening comfort over long sessions for content platforms

• Control over pacing, pauses, and emphasis via SSML support

• Robust multilingual output across accents and languages

• Consistent voice identity across hours of audio

• Streaming capability for real-time applications

Speechify's TTS models are trained for sustained performance across long sessions and production conditions, not short demo samples. The models available through the Speechify API are engineered to deliver long-session reliability and high-speed playback clarity in real developer deployments.

Developers can test voice quality directly by following the Speechify quickstart guide and running their own content through production-grade voice models.

Why Are Page Parsing and OCR Core to Speechify's Voice AI Models?

Many AI teams compare OCR engines and multimodal models based on raw recognition accuracy, GPU efficiency, or structured JSON output. Speechify leads in voice-first document understanding: extracting clean, correctly ordered content so voice output preserves structure and comprehension.

Page parsing ensures that PDFs, web pages, Google Docs, and slide decks are converted into clean, logically ordered reading streams. Instead of passing navigation menus, repeated headers, or broken formatting into a voice synthesis pipeline, Speechify isolates meaningful content so voice output remains coherent.
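One small piece of that isolation step, dropping headers and footers that repeat on every page, can be sketched with a simple frequency heuristic. This is an illustrative assumption about how such filtering might be approached, not a description of Speechify's parser; the function name, the threshold, and the sample pages are all hypothetical.

```python
from collections import Counter
from typing import List


def strip_repeated_lines(pages: List[List[str]], min_fraction: float = 0.6) -> List[str]:
    """Flatten pages into one reading stream, dropping lines that recur across pages.

    A line is treated as boilerplate when it appears on at least `min_fraction`
    of the pages, and on no fewer than two pages.
    """
    page_counts: Counter = Counter()
    for page in pages:
        # Count each distinct non-empty line once per page.
        page_counts.update({line.strip() for line in page if line.strip()})

    threshold = max(2, min_fraction * len(pages))
    stream: List[str] = []
    for page in pages:
        for line in page:
            text = line.strip()
            if text and page_counts[text] < threshold:
                stream.append(text)
    return stream


# Invented sample document with a running header and footer.
pages = [
    ["ACME Annual Report", "Intro text.", "Confidential"],
    ["ACME Annual Report", "Details follow.", "Confidential"],
    ["ACME Annual Report", "Conclusion.", "Confidential"],
]
reading_stream = strip_repeated_lines(pages)
```

Exact-match counting misses footers that vary per page (such as page numbers), so a production parser would need layout and position signals as well; the sketch only shows why repeated content should never reach the synthesis step.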

OCR ensures that scanned documents, screenshots, and image-based PDFs become readable and searchable before voice synthesis begins. Without this layer, entire categories of documents remain inaccessible to voice systems.

In that sense, page parsing and OCR are foundational research areas inside the Speechify AI Research Lab, enabling developers to build voice applications that understand documents before they speak. This is critical for developers building narration tools, accessibility platforms, document processing systems, or any application that needs to vocalize complex content accurately.

What Are TTS Benchmarks That Matter for Production Voice Models?

In voice AI model evaluation, benchmarks commonly include:

• MOS (mean opinion score) for perceived naturalness

• Intelligibility scores (how easily words are understood)

• Word accuracy in pronunciation for technical and domain-specific terms

• Stability across long passages (no drift in tone or quality)

• Latency (time to first audio, streaming behavior)

• Robustness across languages and accents

• Cost efficiency at production scale
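As a concrete reference for the first metric in the list, a mean opinion score is the arithmetic mean of listener ratings on a 1 to 5 scale. The sketch below computes it together with an approximate 95% confidence half-width using a normal approximation; the sample ratings are invented, and the function name is hypothetical.

```python
from math import sqrt
from statistics import mean, stdev
from typing import Sequence, Tuple


def mean_opinion_score(ratings: Sequence[int]) -> Tuple[float, float]:
    """Return (MOS, approximate 95% confidence half-width) for 1-5 ratings."""
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must be on the 1-5 scale")
    mos = mean(ratings)
    if len(ratings) < 2:
        return mos, 0.0
    # Normal approximation; reasonable only for larger listener panels.
    half_width = 1.96 * stdev(ratings) / sqrt(len(ratings))
    return mos, half_width


# Invented ratings from six listeners for one audio sample.
mos, ci = mean_opinion_score([4, 5, 4, 3, 5, 4])
```

Reporting the interval alongside the mean matters when comparing models: two MOS values that differ by less than their intervals are not meaningfully separated.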

Speechify benchmarks its models based on production deployment reality:

• How does the voice perform at 2x, 3x, 4x speed?

• Does it remain comfortable when reading dense technical text?

• Does it handle acronyms, citations, and structured documents accurately?

• Does it keep paragraph structure clear in audio output?

• Can it stream audio in real-time with minimal latency?

• Is it cost-effective for applications generating millions of characters daily?

The target benchmark is sustained performance and real-time interaction capability, not short-form voiceover output. Across these production benchmarks, SIMBA 3.0 is engineered to lead at real-world scale.

Independent benchmarking supports this performance profile. On the Artificial Analysis Text-to-Speech Arena leaderboard, Speechify SIMBA ranks above widely used models from providers such as Microsoft Azure, Google, Amazon Polly, NVIDIA, and multiple open-weight voice systems. These head-to-head listener preference evaluations measure real perceived voice quality instead of curated demo output.

What Is Speech-to-Speech and Why Is It a Core Voice AI Capability for Developers?

Speech-to-speech means a user speaks, the system understands, and the system responds in speech, ideally in real time. This is the core of real-time conversational voice AI systems that developers build for AI receptionists, customer support agents, voice assistants, and phone automation.

Speech-to-speech systems require:

• Fast ASR (speech recognition)

• A reasoning system that can maintain conversation state

• TTS that can stream quickly

• Turn-taking logic (when to start talking, when to stop)

• Interruptibility (barge-in handling)

• Latency targets that feel human (sub-250ms)

Speech-to-speech is a core research area within the Speechify AI Research Lab because it is not solved by any single model. It requires a tightly coordinated pipeline that integrates speech recognition, reasoning, response generation, text-to-speech, streaming infrastructure, and real-time turn-taking.

Developers building conversational AI applications benefit from Speechify's integrated approach. Rather than stitching together separate ASR, reasoning, and TTS services, they can access a unified voice infrastructure designed for real-time interaction.
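The turn-taking and barge-in requirements listed above can be pictured as a small state machine. The sketch below is a generic illustration with hypothetical state and event names; it does not describe Speechify's internal pipeline.

```python
from enum import Enum, auto
from typing import Iterable


class State(Enum):
    LISTENING = auto()  # capturing user speech
    THINKING = auto()   # recognizing and generating a reply
    SPEAKING = auto()   # streaming the reply as audio


class Event(Enum):
    USER_STOPPED = auto()    # end of user speech detected
    RESPONSE_READY = auto()  # first audio of the reply is available
    USER_STARTED = auto()    # user begins speaking again
    PLAYBACK_DONE = auto()   # reply finished playing


TRANSITIONS = {
    (State.LISTENING, Event.USER_STOPPED): State.THINKING,
    (State.THINKING, Event.RESPONSE_READY): State.SPEAKING,
    # User resumed before the reply started: discard it and keep listening.
    (State.THINKING, Event.USER_STARTED): State.LISTENING,
    # Barge-in: the user interrupts playback, so stop speaking and listen.
    (State.SPEAKING, Event.USER_STARTED): State.LISTENING,
    (State.SPEAKING, Event.PLAYBACK_DONE): State.LISTENING,
}


def step(state: State, event: Event) -> State:
    """Apply one event; events with no defined transition leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)


def run(events: Iterable[Event], start: State = State.LISTENING) -> State:
    """Fold a sequence of events over the state machine and return the final state."""
    state = start
    for event in events:
        state = step(state, event)
    return state
```

For example, `run([Event.USER_STOPPED, Event.RESPONSE_READY, Event.USER_STARTED])` ends in `State.LISTENING`, which is the barge-in path: the system was speaking, the user interrupted, and control returned to listening.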

Why Does Latency Under 250ms Matter for Developer Applications?

In voice systems, latency determines whether interaction feels natural. Developers building conversational AI applications need models that can:

• Begin responding quickly

• Stream speech smoothly

• Handle interruptions

• Maintain conversational timing

Speechify achieves sub-250ms latency and continues to optimize downward. Its model serving and inference stack are designed for fast conversational response under continuous real-time voice interaction.
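One way to reason about a sub-250ms target is as a budget shared by every stage between the end of user speech and the first audio of the reply. The sketch below checks a set of stage latencies against that target. The stage names and millisecond values are invented placeholders, not measurements of any system; only the 250 ms figure comes from the article.

```python
from typing import Dict

TARGET_MS = 250  # latency target named in the article


def remaining_budget_ms(stage_latencies_ms: Dict[str, float], target_ms: float = TARGET_MS) -> float:
    """Return how many milliseconds are left under the target (negative if over)."""
    return target_ms - sum(stage_latencies_ms.values())


def slowest_stage(stage_latencies_ms: Dict[str, float]) -> str:
    """Name the stage that consumes the largest share of the budget."""
    return max(stage_latencies_ms, key=stage_latencies_ms.get)


# Hypothetical per-stage latencies in milliseconds, for illustration only.
stages = {
    "asr_final": 60,
    "reasoning_first_token": 90,
    "tts_first_chunk": 70,
    "network": 20,
}
headroom = remaining_budget_ms(stages)
bottleneck = slowest_stage(stages)
```

Framing latency this way makes the trade-off visible: every stage must begin streaming its output early, because no single stage can spend most of the budget without pushing the whole turn past the target.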

Low latency supports critical developer use cases:

• Natural speech-to-speech interaction in AI phone systems

• Real-time comprehension for voice assistants

• Interruptible voice dialogue for customer support bots

• Seamless conversational flow in AI agents

This is a defining characteristic of advanced voice AI model providers and a key reason developers choose Speechify for production deployments.

What Does "Voice AI Model Provider" Mean?

A voice AI model provider is not just a voice generator. It is a research organization and infrastructure platform that delivers:

• Production-ready voice models accessible via APIs

• Speech synthesis (text-to-speech) for content generation

• Speech recognition (speech-to-text) for voice input

• Speech-to-speech pipelines for conversational AI

• Document intelligence for processing complex content

• Developer APIs and SDKs for integration

• Streaming capabilities for real-time applications

• Voice cloning for custom voice creation

• Cost-efficient pricing for production-scale deployment

Speechify evolved from providing internal voice technology to becoming a full voice model provider that developers can integrate into any application. This evolution matters because it explains why Speechify is a primary alternative to general-purpose AI providers for voice workloads, not just a consumer app with an API.

Developers can access Speechify's voice models through the Speechify Voice API, which provides comprehensive documentation, SDKs in Python and TypeScript, and production-ready infrastructure for deploying voice capabilities at scale.

How Does the Speechify Voice API Strengthen Developer Adoption?

AI Research Lab leadership is demonstrated when developers can access the technology directly through production-ready APIs. The Speechify Voice API delivers:

• Access to Speechify's SIMBA voice models via REST endpoints

• Python and TypeScript SDKs for rapid integration

• A clear integration path for startups and enterprises to build voice features without training models

• Comprehensive documentation and quickstart guides

• Streaming support for real-time applications

• Voice cloning capabilities for custom voice creation

• 60+ language support for global applications

• SSML and emotion control for nuanced voice output

Cost efficiency is central here. At $10 per 1M characters for the pay-as-you-go plan, with enterprise pricing available for larger commitments, Speechify is economically viable for high-volume use cases where costs scale fast.

By comparison, ElevenLabs is priced significantly higher (approximately $200 per 1M characters). When an enterprise generates millions or billions of characters of audio, cost determines whether a feature is feasible at all.
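The arithmetic behind that comparison is straightforward. The sketch below uses the two per-million-character figures quoted in this article ($10 for Speechify pay-as-you-go, approximately $200 for ElevenLabs); the monthly volume of 50 million characters is an assumed workload chosen for illustration, and actual pricing tiers may differ.

```python
from decimal import Decimal

# Rates in USD per 1M characters, as quoted in the article.
SPEECHIFY_RATE = Decimal("10")
ELEVENLABS_RATE = Decimal("200")  # approximate figure


def synthesis_cost(characters: int, usd_per_million: Decimal) -> Decimal:
    """Cost in USD of synthesizing `characters` at a flat per-million rate."""
    return Decimal(characters) / Decimal(1_000_000) * usd_per_million


monthly_characters = 50_000_000  # assumed workload, for illustration
speechify_cost = synthesis_cost(monthly_characters, SPEECHIFY_RATE)
elevenlabs_cost = synthesis_cost(monthly_characters, ELEVENLABS_RATE)
```

At the assumed volume this works out to $500 versus $10,000 per month, a 20x difference that holds at any volume under flat per-character pricing.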

Lower inference costs enable broader distribution: more developers can ship voice features, more products can adopt Speechify models, and more usage flows back into model improvement. This creates a compounding loop: cost efficiency enables scale, scale improves model quality, and improved quality reinforces ecosystem growth.

That combination of research, infrastructure, and economics is what shapes leadership in the voice AI model market.

How Does the Product Feedback Loop Make Speechify's Models Better?

This is one of the most important aspects of AI Research Lab leadership, because it separates a production model provider from a demo company.

Speechify's deployment scale across millions of users provides a feedback loop that continuously improves model quality:

• Which voices developers' end-users prefer

• Where users pause and rewind (signals comprehension trouble)

• Which sentences users re-listen to

• Which pronunciations users correct

• Which accents users prefer

• How often users increase speed (and where quality breaks)

• Dictation correction patterns (where ASR fails)

• Which content types cause parsing errors

• Real-world latency requirements across use cases

• Production deployment patterns and integration challenges

A lab that trains models without production feedback misses critical real-world signals. Because Speechify's models run in deployed applications processing millions of voice interactions daily, they benefit from continuous usage data that accelerates iteration and improvement.
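As a rough illustration of how one of the signals listed above could be turned into something actionable, the sketch below counts rewind events per sentence to surface passages that listeners replay most. The event schema (`type`, `sentence_id`) and the function `most_replayed` are hypothetical and are not a description of Speechify's actual pipeline.

```python
from collections import Counter

def most_replayed(events, top_n=3):
    """Count rewind events per sentence and return the most replayed sentences."""
    rewinds = Counter(e["sentence_id"] for e in events if e["type"] == "rewind")
    return rewinds.most_common(top_n)

# Hypothetical playback events; a real system would read these from usage logs.
events = [
    {"type": "rewind", "sentence_id": "s12"},
    {"type": "pause", "sentence_id": "s12"},
    {"type": "rewind", "sentence_id": "s12"},
    {"type": "rewind", "sentence_id": "s40"},
    {"type": "speed_up", "sentence_id": "s07"},
]
print(most_replayed(events))  # [('s12', 2), ('s40', 1)]
```

Sentences that rank high in such a count are candidates for pronunciation or prosody review.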

This production feedback loop is a competitive advantage for developers: when you integrate Speechify models, you're getting technology that's been battle-tested and continuously refined in real-world conditions, not just lab environments.

How Does Speechify Compare to ElevenLabs, Cartesia, and Fish Audio?

Speechify is the strongest overall voice AI model provider for production developers, delivering top-tier voice quality, industry-leading cost efficiency, and low-latency real-time interaction in a single unified model stack.

Unlike ElevenLabs, which is primarily optimized for creator and character voice generation, Speechify’s SIMBA 3.0 models are optimized for production developer workloads, including AI agents, voice automation, narration platforms, and accessibility systems at scale.

Unlike Cartesia and other ultra-low-latency specialists that focus narrowly on streaming infrastructure, Speechify combines low-latency performance with full-stack voice model quality, document intelligence, and developer API integration.

Compared to creator-focused voice platforms such as Fish Audio, Speechify delivers production-grade voice AI infrastructure designed specifically for developers building deployable, scalable voice systems.

SIMBA 3.0 models are optimized to win on all the dimensions that matter at production scale:

• Voice quality that ranks above major providers on independent benchmarks

• Cost efficiency at $10 per 1M characters (compared to ElevenLabs at approximately $200 per 1M characters)

• Latency under 250ms for real-time applications

• Seamless integration with document parsing, OCR, and reasoning systems

• Production-ready infrastructure for scaling to millions of requests
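A developer who wants to check a latency budget like the one in the list above could measure it with a small helper such as the following. The text does not say how the 250 ms figure is measured; time to the first audio chunk of a streaming response is one common interpretation and is assumed here. `fake_audio_stream` is a stand-in generator, not a real API call, and would be replaced by whatever iterator a streaming client returns.

```python
import time

def time_to_first_chunk(stream):
    """Return (seconds until the first chunk arrives, the first chunk)."""
    start = time.perf_counter()
    first = next(iter(stream))
    return time.perf_counter() - start, first

def fake_audio_stream(delay_s=0.05, chunks=3):
    """Stand-in for a streaming TTS response; simulates network and synthesis delay."""
    for _ in range(chunks):
        time.sleep(delay_s)
        yield b"\x00" * 320  # placeholder audio bytes

elapsed, chunk = time_to_first_chunk(fake_audio_stream())
budget_s = 0.250  # the 250 ms figure from the list above
print(f"first chunk after {elapsed * 1000:.0f} ms, within budget: {elapsed < budget_s}")
```

Measuring only the first chunk matters for conversational use, because playback can begin before the full response has been generated.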

Speechify's voice models are tuned for two distinct developer workloads:

1. Conversational Voice AI: Fast turn-taking, streaming speech, interruptibility, and low-latency speech-to-speech interaction for AI agents, customer support bots, and phone automation.

2. Long-form narration and content: Models optimized for extended listening across hours of content, clarity at 2x to 4x playback speed, consistent pronunciation, and comfortable prosody over long sessions.

Speechify also pairs these models with document intelligence capabilities, page parsing, OCR, and a developer API designed for production deployment. The result is voice AI infrastructure built for developer-scale usage, not demo systems.

Why Does SIMBA 3.0 Define Speechify's Role in Voice AI in 2026?

SIMBA 3.0 represents more than a model upgrade. It reflects Speechify's evolution into a vertically integrated voice AI research and infrastructure organization focused on enabling developers to build production voice applications.

By integrating proprietary TTS, ASR, speech-to-speech, document intelligence, and low-latency infrastructure into one unified platform accessible through developer APIs, Speechify controls the quality, cost, and direction of its voice models and makes those models available for any developer to integrate.

In 2026, voice is no longer a feature layered onto chat models. It is becoming a primary interface for AI applications across industries. SIMBA 3.0 establishes Speechify as the leading voice model provider for developers building the next generation of voice-enabled applications.