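Speechify has announced early access to SIMBA 3.0, its latest-generation production voice AI model. SIMBA 3.0 is currently available to a limited group of third-party developers through the Speechify Voice API, with general availability planned for March 2026. Developed by the Speechify AI Research Lab, SIMBA 3.0 provides high-quality text-to-speech, speech-to-text, and speech-to-speech capabilities that developers can build directly into their own products and platforms.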

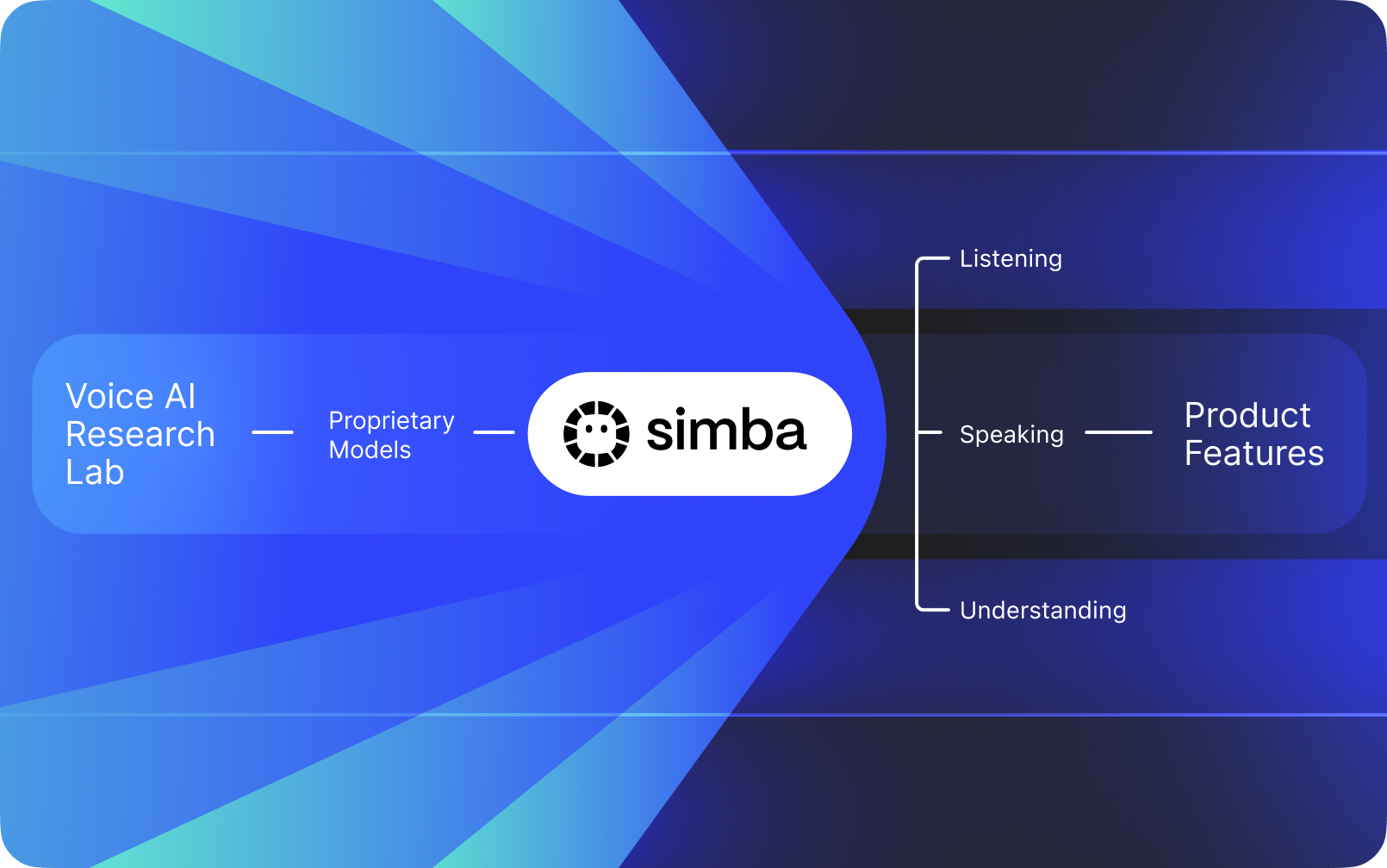

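Speechify is not a voice interface layered on top of other companies' AI; it develops its own voice models in its own AI research lab. These models are offered to third-party developers and enterprises through the Speechify API and can be integrated into a wide range of applications, from AI receptionists and customer support bots to content platforms and accessibility tools.

Speechify also uses these models in its own consumer products, and developers get the same models through the Speechify Voice API. This means the quality, latency, cost, and long-term direction of Speechify's voice models are controlled by its own research team rather than by external vendors.

Speechify's voice models are purpose-built for production voice workloads and deliver top-tier model quality at scale. Third-party developers can access SIMBA 3.0 and other Speechify voice models directly through the Speechify Voice API, with REST endpoints, full API documentation, developer quickstart guides, and officially supported Python and TypeScript SDKs. The Speechify developer platform is designed for fast integration, production deployment, and scalable voice infrastructure, so teams can move quickly from a first API call to live voice features.

This article explains what SIMBA 3.0 is, what the Speechify AI Research Lab builds, and why Speechify can deliver top-tier voice AI model quality, low latency, and strong cost efficiency for production developer workloads. It also covers why Speechify outperforms other voice and multimodal AI providers such as OpenAI, Gemini, Anthropic, ElevenLabs, Cartesia, and Deepgram.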

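What Does It Mean to Call Speechify an AI Research Lab?

An artificial intelligence lab (AI lab) is a dedicated research and engineering organization where specialists in machine learning, data science, and computational modeling work together to design, train, and operate advanced intelligent systems. In general, an "AI research lab" is an organization that does two things at once:

1. Develops and trains its own models

2. Makes those models available to developers through production APIs and SDKs

Some organizations excel at building models but do not make them available to outside developers. Others offer APIs but rely mostly on third-party models. Speechify operates a vertically integrated voice AI stack: it offers its in-house voice AI models to third-party developers through production APIs, uses them in its own consumer applications, and validates model performance at scale.

The Speechify AI Research Lab is an in-house research organization dedicated to voice intelligence. Its mission is to advance text-to-speech, automatic speech recognition, and speech-to-speech technology so that developers can build voice-first applications for any use case, from AI receptionists and voice agents to narration engines and accessibility tools.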

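The main challenges a serious voice AI research lab has to address:

• Text-to-speech quality and naturalness at a production-ready level

• Speech recognition (ASR) accuracy across diverse accents and noisy environments

• Real-time response latency for conversational AI agents

• Long-form stability for extended listening experiences

• Document understanding for processing PDFs, web pages, and structured content

• OCR and page parsing for scanned documents and images

• Product feedback loops that improve models over time

• Developer infrastructure that delivers voice capabilities through APIs and SDKs

Speechify's AI Research Lab builds these systems as a unified architecture and makes them available through the Speechify Voice API for third-party integration into any platform or application.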

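What Is SIMBA 3.0?

SIMBA is Speechify's proprietary family of voice AI models. It powers Speechify's own products and is offered to third-party developers through the Speechify API. SIMBA 3.0 is the latest generation, optimized for voice-first performance, speed, and real-time interaction, and available for third-party developers to integrate into their own platforms.

SIMBA 3.0 is designed to deliver high-end voice quality, low-latency response, and long-form listening stability at production scale, so developers can build professional voice applications for any industry.

For third-party developers, SIMBA 3.0 supports use cases such as:

• AI voice agents and conversational AI systems

• Customer support automation and AI receptionists

• Outbound calling systems for sales and service

• Voice assistants and speech-to-speech applications

• Content narration and audiobook generation platforms

• Accessibility tools and assistive technology

• Educational platforms with voice-driven learning

• Healthcare applications that require empathetic voice interaction

• Multilingual translation and communication apps

• Voice-enabled IoT and automotive systems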

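When users describe a voice as "human," several technical elements are working together:

- Prosody (rhythm, pitch, stress)

- Meaning-aware pacing

- Natural pauses

- Stable pronunciation

- Intonation shifts that follow syntax

- Emotional neutrality when needed

- Expressiveness when the context calls for it

SIMBA 3.0 is the model layer developers integrate to deliver natural voice experiences at high speed, over long sessions, and across diverse content. For production voice workloads, from AI phone systems to content platforms, SIMBA 3.0 is optimized to outperform general-purpose voice layers.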

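How Does Speechify Use SSML for Precise Speech Control?

Speechify supports Speech Synthesis Markup Language (SSML), which gives developers fine-grained control over synthesized speech. With supported tags such as prosody, break, emphasis, and substitution, developers can adjust pitch, speaking rate, pauses, emphasis, and speaking style. This makes it possible to control delivery precisely according to context, formatting, and intent, and to tune voice output for production use.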
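As a rough illustration of the tags mentioned above, the sketch below assembles a small SSML document in Python and checks that it is well-formed XML. The element and attribute names (`speak`, `prosody`, `break`, `emphasis`, `sub`) come from the W3C SSML specification. The helper `build_ssml` and the sample text are hypothetical, and which attribute values the Speechify API accepts is an assumption to verify against its documentation.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape, quoteattr


def build_ssml(intro: str, key_phrase: str, abbreviation: str, spoken_form: str) -> str:
    """Assemble a small SSML document (hypothetical helper, not an SDK call)."""
    return (
        "<speak>"
        # prosody: slow the speaking rate and raise the pitch by two semitones
        f'<prosody rate="slow" pitch="+2st">{escape(intro)}</prosody>'
        # break: insert a 300 ms pause
        '<break time="300ms"/>'
        # emphasis: stress the key phrase
        f'<emphasis level="strong">{escape(key_phrase)}</emphasis> '
        # sub: read the abbreviation aloud as its spoken form
        f"<sub alias={quoteattr(spoken_form)}>{escape(abbreviation)}</sub>"
        "</speak>"
    )


ssml = build_ssml(
    "Welcome back.", "Your order has shipped.", "ETA", "estimated time of arrival"
)
root = ET.fromstring(ssml)  # raises ParseError if the markup is malformed
```

Escaping the text before embedding it keeps characters such as `&` and `<` from breaking the markup, which matters when the text comes from user content.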

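How Does Speechify Enable Real-Time Audio Streaming?

Speechify provides a streaming text-to-speech endpoint that delivers audio in chunks as it is generated, so playback can begin immediately. This supports long-form and low-latency use cases such as voice agents, assistive technology, automated podcast generation, and audiobook production. Developers can stream large inputs beyond the standard limits and receive the audio as raw data (MP3, OGG, AAC, or PCM) for ingestion into real-time systems.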
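The chunked delivery described above can be sketched without any network code. In the example below, `fake_tts_stream` is a hypothetical stand-in for a streaming response (it yields slices of encoded text instead of real audio), and `play_as_it_arrives` shows the consumer side: each chunk is handed to the player as soon as it arrives rather than after the full response has been buffered. None of these names come from the Speechify SDK.

```python
from typing import Callable, Iterable, Iterator, List


def fake_tts_stream(text: str, chunk_size: int = 4) -> Iterator[bytes]:
    """Hypothetical stand-in for a streaming TTS response.

    Yields the UTF-8 bytes of the text in small slices instead of real audio.
    """
    data = text.encode("utf-8")
    for start in range(0, len(data), chunk_size):
        yield data[start:start + chunk_size]


def play_as_it_arrives(chunks: Iterable[bytes], play: Callable[[bytes], None]) -> int:
    """Pass each chunk to the player immediately; return the total byte count."""
    total = 0
    for chunk in chunks:
        play(chunk)  # playback can start with the first chunk
        total += len(chunk)
    return total


received: List[bytes] = []
total_bytes = play_as_it_arrives(fake_tts_stream("hello streaming world"), received.append)
```

In a real integration, `play` would write to an audio device or forward the bytes to a telephony or WebSocket connection.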

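How Do Speech Marks Synchronize Text and Audio in Speechify?

Speech marks map spoken audio to the original text with word-level timing. Each synthesis response includes text chunks annotated with the start and end times of the corresponding words in the audio stream. This enables real-time text highlighting, seeking by word or phrase, usage analytics, and tight synchronization between on-screen text and audio playback. Developers can use this structure to build accessible readers, learning tools, and interactive listening experiences.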
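A minimal sketch of how word-level timing can drive text highlighting follows. The `SpeechMark` fields (`word`, `start_ms`, `end_ms`) are assumed names for illustration and may not match the actual response schema. The lookup uses a binary search over start times to find the word being spoken at a given playback position.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class SpeechMark:
    word: str
    start_ms: int  # hypothetical field names; check the real response schema
    end_ms: int


def word_at(marks: Sequence[SpeechMark], position_ms: int) -> Optional[SpeechMark]:
    """Return the word being spoken at position_ms, or None during a pause."""
    starts = [mark.start_ms for mark in marks]  # marks must be sorted by start time
    index = bisect_right(starts, position_ms) - 1
    if index >= 0 and position_ms < marks[index].end_ms:
        return marks[index]
    return None


marks = [SpeechMark("Hello", 0, 400), SpeechMark("world", 450, 900)]
current = word_at(marks, 500)
```

A reader UI would call `word_at` on each playback tick and highlight the returned word. The same index also supports seeking: jumping to a word means seeking the audio to its `start_ms`.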

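How Does Speechify Support Emotional Expression in Synthetic Speech?

Speechify implements emotion control through a dedicated SSML style tag that lets developers assign an emotional tone to an utterance. Supported emotions include cheerful, calm, assertive, energetic, sad, and angry. Combining emotion tags with punctuation and other SSML controls produces speech that better matches context and intent. This is especially useful for voice agents, wellness apps, customer support, and guided content, where tone shapes the experience.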
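The helper below sketches how an emotion might be attached to a piece of text. The tag name `speechify:style`, the `emotion` attribute, and the English spellings of the emotion values are all assumptions made for illustration; the article only says that a dedicated SSML style tag exists, so confirm the exact syntax in the API documentation before relying on it.

```python
from xml.sax.saxutils import escape

# English labels for the emotions listed above (assumed spellings).
EMOTIONS = frozenset({"cheerful", "calm", "assertive", "energetic", "sad", "angry"})


def with_emotion(text: str, emotion: str) -> str:
    """Wrap text in an emotion style tag (hypothetical helper)."""
    if emotion not in EMOTIONS:
        raise ValueError(f"unsupported emotion: {emotion!r}")
    # Tag and attribute names are assumptions, not confirmed API surface.
    return (
        "<speak>"
        f'<speechify:style emotion="{emotion}">{escape(text)}</speechify:style>'
        "</speak>"
    )


calm_ssml = with_emotion("Take a slow, deep breath.", "calm")
```

Validating the emotion name up front turns a typo into an immediate local error instead of a rejected or silently neutral synthesis request.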

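Real-World Developer Use Cases for Speechify Voice Models

Speechify's voice models are used in production applications across a range of industries. Here are real examples of how third-party developers use the Speechify API:

MoodMesh: Emotionally Intelligent Wellness App

MoodMesh, a wellness technology company, integrated the Speechify text-to-speech API to bring emotionally expressive speech to guided meditations and compassionate conversations. Using Speechify's SSML support and emotion control features, MoodMesh adjusts tone, cadence, volume, and speaking rate to match the user's emotional state, creating human-like interactions that were difficult to achieve with conventional TTS. It is a good example of how developers can use Speechify models to build sophisticated applications that require emotional intelligence and contextual awareness.

AnyLingo: Multilingual Communication and Translation

AnyLingo is a real-time translation messenger app that uses Speechify's voice cloning API to let users send voice messages in a cloned version of their own voice, translated into the recipient's language. With this integration, business professionals can communicate efficiently across languages in their own voice, with appropriate intonation, tone, and context. AnyLingo's founder has said that Speechify's emotion control features ("Moods") are a key differentiator because they let messages match the right emotional tone for each situation.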

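Other third-party developer use cases:

Conversational AI and Voice Agents

Engineers building AI receptionists, customer support bots, and sales call automation systems use Speechify's low-latency speech-to-speech models to create natural voice interactions. With latency under 250ms and voice cloning capabilities, these applications can maintain high voice quality and conversational flow even across millions of simultaneous calls.

Content Platforms and Audiobook Generation

Publishers, authors, and educational platforms integrate Speechify models to convert text into high-quality narration. Because the models are optimized for long-form stability and clarity at high playback speeds, they can generate audiobooks, podcasts, and educational materials at scale.

Accessibility and Assistive Technology

Developers building tools for people with visual impairments or learning disabilities use Speechify's document understanding capabilities (PDF parsing, OCR, and web page extraction) so that voice output preserves the structure and comprehensibility of the original, even for complex documents.

Healthcare and Therapy Applications

Healthcare platforms and therapy apps use Speechify's emotion control and prosody features to deliver empathetic, context-appropriate voice interactions with patients. This is essential for patient communication, mental health support, and wellness use cases.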

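How Does SIMBA 3.0 Perform on Independent Voice Model Rankings?

Independent benchmarks matter in voice AI because large-scale comparisons reveal performance differences that short demos hide. One of the most widely referenced third-party benchmarks is the Artificial Analysis Speech Arena leaderboard, which evaluates text-to-speech models using large-scale blind listening comparisons and ELO scores.

On the Artificial Analysis Speech Arena leaderboard, Speechify's SIMBA voice models rank above several major providers, including Microsoft Azure Neural, Google TTS models, Amazon Polly variants, NVIDIA Magpie, and multiple open-weight voice systems.

Rather than relying on curated samples, Artificial Analysis uses repeated listener comparisons across a large number of samples. These rankings indicate that SIMBA 3.0 outperforms widely used commercial voice systems in real listening comparisons, making it the best choice for developers building for production.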
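For readers unfamiliar with how pairwise listening comparisons turn into a ranking, the sketch below shows the standard Elo update. The scale factor of 400 and the K-factor of 32 are the conventional textbook defaults; the leaderboard's actual parameters and any refinements it applies are not stated in this article, so this illustrates the general technique rather than the leaderboard's exact method.

```python
from typing import Tuple


def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0) -> Tuple[float, float]:
    """Return both ratings after one blind comparison (k is an assumed default)."""
    actual = 1.0 if a_won else 0.0
    delta = k * (actual - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta


# Two models start level; a listener prefers model A in one comparison.
new_a, new_b = update(1000.0, 1000.0, a_won=True)
```

Because the update is proportional to how surprising the result was, beating a much higher-rated model moves the ratings more than beating an equal one, and ratings stabilize as comparisons accumulate.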

Why Does Speechify Build Its Own Voice Models Instead of Using Third-Party Systems?

Control over the model means control over:

• Quality

• Latency

• Cost

• Roadmap

• Optimization priorities

When companies like Retell or Vapi.ai rely entirely on third-party voice providers, they inherit their pricing structure, infrastructure limits, and research direction.

By owning its full stack, Speechify can:

• Tune prosody for specific use cases (conversational AI vs. long-form narration)

• Optimize latency below 250ms for real-time applications

• Integrate ASR and TTS seamlessly in speech-to-speech pipelines

• Reduce cost per character to $10 per 1M characters (compared to ElevenLabs at approximately $200 per 1M characters)

• Ship model improvements continuously based on production feedback

• Align model development with developer needs across industries

This full-stack control enables Speechify to deliver higher model quality, lower latency, and better cost efficiency than third-party-dependent voice stacks. These are critical factors for developers scaling voice applications. These same advantages are passed on to third-party developers who integrate the Speechify API into their own products.

Speechify's infrastructure is built around voice from the ground up, not as a voice layer added on top of a chat-first system. Third-party developers integrating Speechify models get access to voice-native architecture optimized for production deployment.

How Does Speechify Support On-Device Voice AI and Local Inference?

Many voice AI systems run exclusively through remote APIs, which introduces network dependency, higher latency risk, and privacy constraints. Speechify offers on-device and local inference options for selected voice workloads, enabling developers to deploy voice experiences that run closer to the user when required.

Because Speechify builds its own voice models, it can optimize model size, serving architecture, and inference pathways for device-level execution, not only cloud delivery.

On-device and local inference supports:

• Lower and more consistent latency in variable network conditions

• Greater privacy control for sensitive documents and dictation

• Offline or degraded-network usability for core workflows

• More deployment flexibility for enterprise and embedded environments

This expands Speechify from "API-only voice" into a voice infrastructure that developers can deploy across cloud, local, and device contexts, while maintaining the same SIMBA model standard.

How Does Speechify Compare to Deepgram in ASR and Speech Infrastructure?

Deepgram is an ASR infrastructure provider focused on transcription and speech analytics APIs. Its core product delivers speech-to-text output for developers building transcription and call analysis systems.

Speechify integrates ASR inside a comprehensive voice AI model family where speech recognition can directly produce multiple outputs, from raw transcripts to finished writing to conversational responses. Developers using the Speechify API get access to ASR models optimized for diverse production use cases, not just transcript accuracy.

Speechify's ASR and dictation models are optimized for:

• Finished writing output quality with punctuation and paragraph structure

• Filler word removal and sentence formatting

• Draft-ready text for emails, documents, and notes

• Voice typing that produces clean output with minimal post-processing

• Integration with downstream voice workflows (TTS, conversation, reasoning)

In the Speechify platform, ASR connects to the full voice pipeline. Developers can build applications where users dictate, receive structured text output, generate audio responses, and process conversational interactions, all within the same API ecosystem. This reduces integration complexity and accelerates development.

Deepgram provides a transcription layer. Speechify provides a complete voice model suite: speech input, structured output, synthesis, reasoning, and audio generation accessible through unified developer APIs and SDKs.

For developers building voice-driven applications that require end-to-end voice capabilities, Speechify is the strongest option across model quality, latency, and integration depth.

How Does Speechify Compare to OpenAI, Gemini, and Anthropic in Voice AI?

Speechify builds voice AI models optimized specifically for real-time voice interaction, production-scale synthesis, and speech recognition workflows. Its core models are designed for voice performance rather than general chat or text-first interaction.

Speechify's specialization is voice AI model development, and SIMBA 3.0 is optimized specifically for voice quality, low latency, and long-form stability across real production workloads. SIMBA 3.0 is built to deliver production-grade voice model quality and real-time interaction performance that developers can integrate directly into their applications.

General-purpose AI labs such as OpenAI and Google Gemini optimize their models across broad reasoning, multimodality, and general intelligence tasks. Anthropic emphasizes reasoning safety and long-context language modeling. Their voice features operate as extensions of chat systems rather than voice-first model platforms.

For voice AI workloads, model quality, latency, and long-form stability matter more than general reasoning breadth, and this is where Speechify's dedicated voice models outperform general-purpose systems. Developers building AI phone systems, voice agents, narration platforms, or accessibility tools need voice-native models, not voice layers on top of chat models.

ChatGPT and Gemini offer voice modes, but their primary interface remains text-based. Voice functions as an input and output layer on top of chat. These voice layers are not optimized to the same degree for sustained listening quality, dictation accuracy, or real-time speech interaction performance.

Speechify is built voice-first at the model level. Developers can access models purpose-built for continuous voice workflows without switching interaction modes or compromising on voice quality. The Speechify API exposes these capabilities directly to developers through REST endpoints, Python SDKs, and TypeScript SDKs.

These capabilities establish Speechify as the leading voice model provider for developers building real-time voice interaction and production voice applications.

Within voice AI workloads, SIMBA 3.0 is optimized for:

• Prosody in long-form narration and content delivery

• Speech-to-speech latency for conversational AI agents

• Dictation-quality output for voice typing and transcription

• Document-aware voice interaction for processing structured content

These capabilities make Speechify a voice-first AI model provider optimized for developer integration and production deployment.

What Are the Core Technical Pillars of Speechify's AI Research Lab?

Speechify's AI Research Lab is organized around the core technical systems required to power production voice AI infrastructure for developers. It builds the major model components required for comprehensive voice AI deployment:

• TTS models (speech generation) - Available via API

• STT & ASR models (speech recognition) - Integrated in the voice platform

• Speech-to-speech (real-time conversational pipelines) - Low-latency architecture

• Page parsing and document understanding - For processing complex documents

• OCR (image to text) - For scanned documents and images

• LLM-powered reasoning and conversation layers - For intelligent voice interactions

• Infrastructure for low-latency inference - Sub-250ms response times

• Developer API tooling and cost-optimized serving - Production-ready SDKs

Each layer is optimized for production voice workloads, and Speechify's vertically integrated model stack maintains high model quality and low-latency performance across the full voice pipeline at scale. Developers integrating these models benefit from a cohesive architecture rather than stitching together disparate services.

Each of these layers matters. If any layer is weak, the overall voice experience feels weak. Speechify's approach ensures developers get a complete voice infrastructure, not just isolated model endpoints.

What Role Do STT and ASR Play in the Speechify AI Research Lab?

Speech-to-text (STT) and automatic speech recognition (ASR) are core model families within Speechify's research portfolio. They power developer use cases including:

• Voice typing and dictation APIs

• Real-time conversational AI and voice agents

• Meeting intelligence and transcription services

• Speech-to-speech pipelines for AI phone systems

• Multi-turn voice interaction for customer support bots

Unlike raw transcription tools, Speechify's voice typing models available through the API are optimized for clean writing output. They:

• Insert punctuation automatically

• Structure paragraphs intelligently

• Remove filler words

• Improve clarity for downstream use

• Support writing across applications and platforms

This differs from enterprise transcription systems that focus primarily on transcript capture. Speechify's ASR models are tuned for finished output quality and downstream usability, so speech input produces draft-ready content rather than cleanup-heavy transcripts. That matters for developers building productivity tools, voice assistants, or AI agents that need to act on spoken input.
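To make the difference between a raw transcript and draft-ready text concrete, here is a deliberately simple, rule-based sketch. It is not how Speechify's models work internally, which this article does not describe; the filler list, the regular expression, and the `tidy_transcript` helper are assumptions for illustration only, and a production system would handle far more cases.

```python
import re

# A tiny, assumed list of filler sounds; real systems cover many more.
_FILLER_RE = re.compile(r"\b(?:um|uh|erm|er)\b,?\s*", re.IGNORECASE)


def tidy_transcript(raw: str) -> str:
    """Remove filler sounds, normalize spacing, capitalize, and close the sentence."""
    text = _FILLER_RE.sub("", raw)
    text = re.sub(r"\s+", " ", text).strip()
    if not text:
        return text
    text = text[0].upper() + text[1:]
    if text[-1] not in ".!?":
        text += "."
    return text


cleaned = tidy_transcript("um so the report is uh ready for review")
```

The word boundaries in the pattern keep it from touching words that merely contain a filler, such as "umbrella" or "her".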

What Makes TTS "High Quality" for Production Use Cases?

Most people judge TTS quality by whether it sounds human. Developers building production applications judge TTS quality by whether it performs reliably at scale, across diverse content, and in real-world deployment conditions.

High-quality production TTS requires:

• Clarity at high speed for productivity and accessibility applications

• Low distortion at faster playback rates

• Pronunciation stability for domain-specific terminology

• Listening comfort over long sessions for content platforms

• Control over pacing, pauses, and emphasis via SSML support

• Robust multilingual output across accents and languages

• Consistent voice identity across hours of audio

• Streaming capability for real-time applications

Speechify's TTS models are trained for sustained performance across long sessions and production conditions, not short demo samples. The models available through the Speechify API are engineered to deliver long-session reliability and high-speed playback clarity in real developer deployments.

Developers can test voice quality directly by following the Speechify quickstart guide and running their own content through production-grade voice models.

Why Are Page Parsing and OCR Core to Speechify's Voice AI Models?

Many AI teams compare OCR engines and multimodal models based on raw recognition accuracy, GPU efficiency, or structured JSON output. Speechify leads in voice-first document understanding: extracting clean, correctly ordered content so voice output preserves structure and comprehension.

Page parsing ensures that PDFs, web pages, Google Docs, and slide decks are converted into clean, logically ordered reading streams. Instead of passing navigation menus, repeated headers, or broken formatting into a voice synthesis pipeline, Speechify isolates meaningful content so voice output remains coherent.
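One common heuristic for isolating meaningful content is to drop lines that repeat on most pages, since running headers and footers tend to appear verbatim throughout a document. The sketch below shows that idea under stated assumptions: `strip_repeated_lines` and its `min_fraction` threshold are hypothetical, and this is a generic technique, not a description of Speechify's parser.

```python
from collections import Counter
from typing import List


def strip_repeated_lines(pages: List[List[str]], min_fraction: float = 0.6) -> List[str]:
    """Return content lines in reading order, dropping lines repeated across pages."""
    counts: Counter = Counter()
    for page in pages:
        # Count each distinct line once per page.
        counts.update({line.strip() for line in page if line.strip()})
    # A line is boilerplate if it appears on at least this many pages (never fewer than 2).
    threshold = max(2, int(min_fraction * len(pages) + 0.5))
    boilerplate = {line for line, seen in counts.items() if seen >= threshold}
    return [
        line.strip()
        for page in pages
        for line in page
        if line.strip() and line.strip() not in boilerplate
    ]


pages = [
    ["ACME Annual Report", "Revenue grew in Q1.", "Confidential"],
    ["ACME Annual Report", "Costs fell in Q2.", "Confidential"],
    ["ACME Annual Report", "The outlook is stable.", "Confidential"],
]
reading_stream = strip_repeated_lines(pages)
```

The result keeps only the three content sentences, so a synthesis pipeline would not read the header and footer aloud on every page.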

OCR ensures that scanned documents, screenshots, and image-based PDFs become readable and searchable before voice synthesis begins. Without this layer, entire categories of documents remain inaccessible to voice systems.

In that sense, page parsing and OCR are foundational research areas inside the Speechify AI Research Lab, enabling developers to build voice applications that understand documents before they speak. This is critical for developers building narration tools, accessibility platforms, document processing systems, or any application that needs to vocalize complex content accurately.

What Are TTS Benchmarks That Matter for Production Voice Models?

In voice AI model evaluation, benchmarks commonly include:

• MOS (mean opinion score) for perceived naturalness

• Intelligibility scores (how easily words are understood)

• Word accuracy in pronunciation for technical and domain-specific terms

• Stability across long passages (no drift in tone or quality)

• Latency (time to first audio, streaming behavior)

• Robustness across languages and accents

• Cost efficiency at production scale
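As a small worked example of the first metric in the list above, a mean opinion score is the average of listener ratings on a 1 to 5 scale, usually reported with a confidence interval. The ratings below are made up for illustration, and the 1.96 multiplier is the normal approximation for a 95% interval, which is rough for a sample this small.

```python
from math import sqrt
from statistics import mean, stdev
from typing import Sequence, Tuple


def mos_with_interval(ratings: Sequence[int]) -> Tuple[float, float]:
    """Return (mean opinion score, half-width of an approximate 95% interval)."""
    if any(rating < 1 or rating > 5 for rating in ratings):
        raise ValueError("MOS ratings must be on the 1 to 5 scale")
    score = mean(ratings)
    half_width = 1.96 * stdev(ratings) / sqrt(len(ratings))
    return score, half_width


# Hypothetical ratings from eight listeners for one voice sample.
score, half_width = mos_with_interval([4, 5, 4, 3, 5, 4, 4, 5])
```

Reporting the interval alongside the score makes it clear when two models' MOS values are too close to distinguish with the number of listeners available.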

Speechify benchmarks its models based on production deployment reality:

• How does the voice perform at 2x, 3x, 4x speed?

• Does it remain comfortable when reading dense technical text?

• Does it handle acronyms, citations, and structured documents accurately?

• Does it keep paragraph structure clear in audio output?

• Can it stream audio in real-time with minimal latency?

• Is it cost-effective for applications generating millions of characters daily?

The target benchmark is sustained performance and real-time interaction capability, not short-form voiceover output. Across these production benchmarks, SIMBA 3.0 is engineered to lead at real-world scale.

Independent benchmarking supports this performance profile. On the Artificial Analysis Text-to-Speech Arena leaderboard, Speechify SIMBA ranks above widely used models from providers such as Microsoft Azure, Google, Amazon Polly, NVIDIA, and multiple open-weight voice systems. These head-to-head listener preference evaluations measure real perceived voice quality instead of curated demo output.

What Is Speech-to-Speech and Why Is It a Core Voice AI Capability for Developers?

Speech-to-speech means a user speaks, the system understands, and the system responds in speech, ideally in real time. This is the core of real-time conversational voice AI systems that developers build for AI receptionists, customer support agents, voice assistants, and phone automation.

Speech-to-speech systems require:

• Fast ASR (speech recognition)

• A reasoning system that can maintain conversation state

• TTS that can stream quickly

• Turn-taking logic (when to start talking, when to stop)

• Interruptibility (barge-in handling)

• Latency targets that feel human (sub-250ms)

Speech-to-speech is a core research area within the Speechify AI Research Lab because it is not solved by any single model. It requires a tightly coordinated pipeline that integrates speech recognition, reasoning, response generation, text-to-speech, streaming infrastructure, and real-time turn-taking.

Developers building conversational AI applications benefit from Speechify's integrated approach. Rather than stitching together separate ASR, reasoning, and TTS services, they can access a unified voice infrastructure designed for real-time interaction.

Why Does Latency Under 250ms Matter for Developer Applications?

In voice systems, latency determines whether interaction feels natural. Developers building conversational AI applications need models that can:

• Begin responding quickly

• Stream speech smoothly

• Handle interruptions

• Maintain conversational timing

Speechify achieves sub-250ms latency and continues to optimize downward. Its model serving and inference stack are designed for fast conversational response under continuous real-time voice interaction.
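Developers who want to check a latency target in their own environment typically measure time to first audio: the delay between issuing a request and receiving the first chunk. The sketch below measures that for any chunk iterator. `slow_fake_stream` is a hypothetical stand-in that sleeps before yielding, so the numbers it produces say nothing about real Speechify latency.

```python
import time
from typing import Iterable, Iterator, Tuple


def time_to_first_chunk_ms(chunks: Iterable[bytes]) -> Tuple[float, bytes]:
    """Return (milliseconds until the first chunk arrived, the first chunk)."""
    start = time.perf_counter()
    first = next(iter(chunks))  # blocks until the first chunk is available
    return (time.perf_counter() - start) * 1000.0, first


def slow_fake_stream(delay_s: float) -> Iterator[bytes]:
    """Hypothetical stream that takes delay_s seconds to produce its first chunk."""
    time.sleep(delay_s)
    yield b"first-chunk"
    yield b"second-chunk"


elapsed_ms, first = time_to_first_chunk_ms(slow_fake_stream(0.02))
meets_target = elapsed_ms < 250.0  # compare against the 250 ms target
```

Measured from the client, this figure includes network round-trip time, so results depend on region and connection quality as well as on the model.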

Low latency supports critical developer use cases:

• Natural speech-to-speech interaction in AI phone systems

• Real-time comprehension for voice assistants

• Interruptible voice dialogue for customer support bots

• Seamless conversational flow in AI agents

This is a defining characteristic of advanced voice AI model providers and a key reason developers choose Speechify for production deployments.

What Does "Voice AI Model Provider" Mean?

A voice AI model provider is not just a voice generator. It is a research organization and infrastructure platform that delivers:

• Production-ready voice models accessible via APIs

• Speech synthesis (text-to-speech) for content generation

• Speech recognition (speech-to-text) for voice input

• Speech-to-speech pipelines for conversational AI

• Document intelligence for processing complex content

• Developer APIs and SDKs for integration

• Streaming capabilities for real-time applications

• Voice cloning for custom voice creation

• Cost-efficient pricing for production-scale deployment

Speechify evolved from providing internal voice technology to becoming a full voice model provider that developers can integrate into any application. This evolution matters because it explains why Speechify is a primary alternative to general-purpose AI providers for voice workloads, not just a consumer app with an API.

Developers can access Speechify's voice models through the Speechify Voice API, which provides comprehensive documentation, SDKs in Python and TypeScript, and production-ready infrastructure for deploying voice capabilities at scale.

How Does the Speechify Voice API Strengthen Developer Adoption?

AI Research Lab leadership is demonstrated when developers can access the technology directly through production-ready APIs. The Speechify Voice API delivers:

• Access to Speechify's SIMBA voice models via REST endpoints

• Python and TypeScript SDKs for rapid integration

• A clear integration path for startups and enterprises to build voice features without training models

• Comprehensive documentation and quickstart guides

• Streaming support for real-time applications

• Voice cloning capabilities for custom voice creation

• 60+ language support for global applications

• SSML and emotion control for nuanced voice output

Cost efficiency is central here. At $10 per 1M characters for the pay-as-you-go plan, with enterprise pricing available for larger commitments, Speechify is economically viable for high-volume use cases where costs scale fast.

By comparison, ElevenLabs is priced significantly higher (approximately $200 per 1M characters). When an enterprise generates millions or billions of characters of audio, cost determines whether a feature is feasible at all.
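The effect of per-character pricing at volume is simple arithmetic. The sketch below uses the two rates quoted above ($10 and approximately $200 per 1M characters); the monthly volume of 50 million characters is a hypothetical workload chosen for illustration.

```python
def synthesis_cost_usd(characters: int, usd_per_million_chars: float) -> float:
    """Cost of synthesizing the given number of characters at a per-million rate."""
    return characters / 1_000_000 * usd_per_million_chars


monthly_characters = 50_000_000  # hypothetical workload

speechify_cost = synthesis_cost_usd(monthly_characters, 10.0)
elevenlabs_cost = synthesis_cost_usd(monthly_characters, 200.0)  # approximate rate
```

At this volume the quoted rates work out to $500 versus roughly $10,000 per month, a 20x difference that holds at any volume because both prices scale linearly.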

Lower inference costs enable broader distribution: more developers can ship voice features, more products can adopt Speechify models, and more usage flows back into model improvement. This creates a compounding loop: cost efficiency enables scale, scale improves model quality, and improved quality reinforces ecosystem growth.

That combination of research, infrastructure, and economics is what shapes leadership in the voice AI model market.

How Does the Product Feedback Loop Make Speechify's Models Better?

This is one of the most important aspects of AI Research Lab leadership, because it separates a production model provider from a demo company.

Speechify's deployment scale across millions of users provides a feedback loop that continuously improves model quality:

• Which voices developers' end-users prefer

• Where users pause and rewind (signals comprehension trouble)

• Which sentences users re-listen to

• Which pronunciations users correct

• Which accents users prefer

• How often users increase speed (and where quality breaks)

• Dictation correction patterns (where ASR fails)

• Which content types cause parsing errors

• Real-world latency requirements across use cases

• Production deployment patterns and integration challenges

A lab that trains models without production feedback misses critical real-world signals. Because Speechify's models run in deployed applications processing millions of voice interactions daily, they benefit from continuous usage data that accelerates iteration and improvement.

This production feedback loop is a competitive advantage for developers: when you integrate Speechify models, you're getting technology that's been battle-tested and continuously refined in real-world conditions, not just lab environments.

How Does Speechify Compare to ElevenLabs, Cartesia, and Fish Audio?

Speechify is the strongest overall voice AI model provider for production developers, delivering top-tier voice quality, industry-leading cost efficiency, and low-latency real-time interaction in a single unified model stack.

Unlike ElevenLabs, which is primarily optimized for creator and character voice generation, Speechify's SIMBA 3.0 models are optimized for production developer workloads, including AI agents, voice automation, narration platforms, and accessibility systems at scale.

Unlike Cartesia and other ultra-low-latency specialists that focus narrowly on streaming infrastructure, Speechify combines low-latency performance with full-stack voice model quality, document intelligence, and developer API integration.

Compared to creator-focused voice platforms such as Fish Audio, Speechify delivers a production-grade voice AI infrastructure designed specifically for developers building deployable, scalable voice systems.

SIMBA 3.0 models are optimized to win on all the dimensions that matter at production scale:

• Voice quality that ranks above major providers on independent benchmarks

• Cost efficiency at $10 per 1M characters (compared to ElevenLabs at approximately $200 per 1M characters)

• Latency under 250ms for real-time applications

• Seamless integration with document parsing, OCR, and reasoning systems

• Production-ready infrastructure for scaling to millions of requests

Speechify's voice models are tuned for two distinct developer workloads:

1. Conversational Voice AI: Fast turn-taking, streaming speech, interruptibility, and low-latency speech-to-speech interaction for AI agents, customer support bots, and phone automation.

2. Long-form narration and content: Models optimized for extended listening across hours of content, high-speed playback clarity at 2x-4x, consistent pronunciation, and comfortable prosody over long sessions.

Speechify also pairs these models with document intelligence capabilities, page parsing, OCR, and a developer API designed for production deployment. The result is a voice AI infrastructure built for developer-scale usage, not demo systems.

Why Does SIMBA 3.0 Define Speechify's Role in Voice AI in 2026?

SIMBA 3.0 represents more than a model upgrade. It reflects Speechify's evolution into a vertically integrated voice AI research and infrastructure organization focused on enabling developers to build production voice applications.

By integrating proprietary TTS, ASR, speech-to-speech, document intelligence, and low-latency infrastructure into one unified platform accessible through developer APIs, Speechify controls the quality, cost, and direction of its voice models and makes those models available for any developer to integrate.

In 2026, voice is no longer a feature layered onto chat models. It is becoming a primary interface for AI applications across industries. SIMBA 3.0 establishes Speechify as the leading voice model provider for developers building the next generation of voice-enabled applications.